Markerless Motion Capture: A Practical Guide

If you're looking at performance capture for a series, a game trailer, an XR activation, or a virtual production workflow, you've probably hit the same wall many teams hit. Traditional mocap can deliver excellent data, but it often asks for a lot in return. Specialised stages. Suits. Markers. Prep time. Calibration discipline. A room full of people solving technical problems before the creative work can even begin.

That friction matters more than most buying guides admit. It affects actor comfort, scheduling, continuity across episodes, and how quickly directors can review a take and move on. It also affects whether motion capture becomes a practical production tool or a technical luxury that only fits a narrow set of shoots.

Markerless motion capture changes that equation. Instead of attaching retroreflective markers to a performer, the system analyses video, identifies the body in motion, and reconstructs a 3D skeletal performance from the footage. In practice, that means fewer barriers between rehearsal and capture, less interruption on set, and motion that often feels more natural because performers aren't physically constrained in the same way.

That shift also sits inside a wider production change. Teams are combining real-time tools, AI-assisted workflows, and faster iteration loops across animation and XR. If you're thinking about where this is going next, this roundup of actionable advice on AI trends is useful because it frames the bigger operational question, not just the headline technology. Markerless capture makes the most sense when it plugs into a broader pipeline that values iteration speed. For producers and creative leads already working with LED stages, real-time previs, or engine-based delivery, it also connects naturally to virtual production workflows used by modern creative teams.

Introduction: The Future of Performance Capture Is Here

A lot of teams first approach markerless motion capture as a cost or convenience question. That's understandable, but it undersells what the technology changes. The bigger difference is creative tempo. You spend less time preparing the body for capture and more time working on performance, blocking, timing, and intent. In practical production terms, that's a major shift. The old bottlenecks don't disappear completely, but they move. Instead of marker placement and suit logistics dominating the day, the pressure shifts toward camera planning, lighting consistency, solve quality, and downstream retargeting. Those are still real technical considerations, but they're usually better aligned with how a production team already thinks.

Where the pain usually sits

On a traditional stage, the slow parts often happen before the first useful take. Talent gets prepped. The capture volume is checked. Markers are placed and adjusted. If a marker slips, rotates, or is occluded, the cleanup arrives later in post. None of that is glamorous, and all of it costs time. Markerless motion capture reduces a lot of that physical overhead. That doesn't mean it's automatic. It means the setup burden shifts from dressing the performer to designing the capture environment intelligently.

Practical rule: If the performance is the asset, remove anything that interrupts the performer unless the added precision is essential to the shot.

Why clients are paying attention now

The appeal is straightforward. Teams want realistic motion, shorter setup cycles, and a path from capture to animation that doesn't create unnecessary handoffs. For episodic content and immersive work especially, that combination is hard to ignore. Clients also want flexibility. They may need quick previs one week, engine-ready animation the next, and a polished final pass after that. Markerless systems are well suited to that kind of mixed production reality because they can support rapid acquisition while still feeding structured animation workflows.

What Is Markerless Motion Capture

At its simplest, markerless motion capture is the process of turning filmed human movement into usable 3D motion data without attaching physical markers to the performer. A useful way to think about it is this. Imagine an expert animator watching multiple camera feeds and tracing a digital skeleton over the body frame by frame, then reconstructing that movement in three dimensions. Markerless systems automate that process with computer vision, pose estimation, and skeletal solving.

What it replaces

Traditional marker-based mocap depends on visible physical points attached to the body. The cameras track those points, then software interprets them as movement through space. Markerless capture removes the physical marker stage and asks software to identify the body directly from video. That sounds like a small difference. It isn't. It changes the capture experience for performers, the prep requirements for production, and the kinds of environments where capture becomes feasible. A performer can move without adhesive markers, dedicated suits, or the same degree of physical instrumentation. For some productions, that's the main benefit. For others, the bigger gain is that the crew can move faster from rehearsal to review.

What the system is actually trying to produce

The goal isn't video analysis for its own sake. The goal is a clean, structured representation of body motion that can be retargeted onto a rig, analysed for biomechanics, visualised in real time, or fed into an engine pipeline. That means good markerless mocap isn't judged only by whether it "tracks". It has to produce motion data that is stable enough to survive the rest of production. Here are the practical outputs teams usually care about:

- Animation-ready body motion for character rigs in Maya, MotionBuilder, Unity, or Unreal.

- Real-time visualisation so directors and supervisors can assess whether a take is working while the performer is still on set.

- Repeatable skeletal data that can be cleaned, edited, and integrated into a broader shot pipeline.

- Natural movement capture where wardrobe, body language, and actor freedom matter to the final result.

Markerless motion capture isn't just a different way to collect movement. It's a different way to organise production around movement.

Where expectations should stay realistic

It's not magic, and it's not a universal replacement for every marker-based stage. Shots involving dense occlusion, complex prop contact, unusual costumes, or very specific interaction points can still be difficult. The quality of the result depends on the capture design, not just the software licence. That's why the smartest teams don't ask, "Is markerless better?" They ask, "Is markerless the right fit for this kind of motion, this delivery format, and this schedule?"

How The Technology Translates Movement to Data

On a real shoot, the handoff from performance to usable motion matters more than the buzzwords. A producer wants to know whether the system will give the animation team clean body data, whether supervisors can review takes on set, and whether the result will hold up once it hits retargeting, engine ingest, and final delivery.

Camera capture and scene coverage

The process starts with coverage. Cameras observe the performer from enough angles to give the software a reliable read of body position over time. Depending on the system, that may involve depth-aware capture or standard video cameras feeding a reconstruction pipeline. Single-camera approaches can work for lighter use cases, previs, or constrained movement. Production-grade capture benefits from multiple synchronised views because occlusion is the main enemy. If an arm disappears behind the torso from one angle, another camera may still see it clearly enough to keep the solve stable. Some markerless workflows can also combine 2D video from standard cameras with higher-end multi-camera arrays in one coordinated solve pipeline. At Studio Liddell, this is usually the first practical discussion with a client. The question is not just how many cameras fit in the room. It is whether the shot design, performer count, wardrobe, and intended output justify a tighter capture volume or a wider one. Better coverage raises confidence, but it also increases calibration, data handling, and review overhead.
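To see why a second view helps, consider how two cameras pin down one joint in 3D. The sketch below uses the midpoint method: each calibrated camera contributes a line from its centre through the joint it detected, and the estimate is the midpoint of the two lines' closest approach. It assumes camera centres and per-joint ray directions are already known from calibration; production solvers typically fuse more views with more robust estimators, and `triangulate_midpoint` is an illustrative name, not any vendor's API.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]


def _sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def _along(origin: Vec3, direction: Vec3, t: float) -> Vec3:
    return (origin[0] + t * direction[0],
            origin[1] + t * direction[1],
            origin[2] + t * direction[2])


def triangulate_midpoint(c1: Vec3, d1: Vec3, c2: Vec3, d2: Vec3) -> Tuple[Vec3, float]:
    """Estimate a 3D joint from two camera views (assumed calibrated).

    c1/c2 are camera centres; d1/d2 are directions from each centre through
    the joint detected in that camera's image. The lines are treated as
    infinite. Returns the midpoint of their closest approach and the gap
    between them; a large gap suggests the views disagree.
    """
    w0 = _sub(c1, c2)
    a, b, c = _dot(d1, d1), _dot(d1, d2), _dot(d2, d2)
    d, e = _dot(d1, w0), _dot(d2, w0)
    denom = a * c - b * b
    if abs(denom) < 1e-12:
        # Parallel lines: the two views give no depth information.
        raise ValueError("near-parallel view directions: cannot triangulate")
    s = (b * e - c * d) / denom  # parameter along the first line
    t = (a * e - b * d) / denom  # parameter along the second line
    p1, p2 = _along(c1, d1, s), _along(c2, d2, t)
    mid = ((p1[0] + p2[0]) / 2, (p1[1] + p2[1]) / 2, (p1[2] + p2[2]) / 2)
    return mid, math.dist(p1, p2)
```

The returned gap is a useful sanity signal: when one camera misdetects a joint, the two lines stop nearly meeting and the gap grows.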

Pose estimation and skeletal solving

Once footage is captured, the software identifies the performer and tracks body landmarks frame by frame. It estimates joint locations, infers limb orientation, and builds a skeletal solve that can be reviewed in preview before it moves deeper into the pipeline. The term "AI" gets used loosely here. What matters in production is whether the system holds together through turns, crossings, changes in speed, and partial occlusion. A good solve is not just visually plausible. It is consistent. If hip rotation jitters, shoulders pop, or hands drift during overlap, those problems spread fast into cleanup and retargeting. Broadcast and XR teams feel that cost immediately because review cycles are short and downstream departments have less tolerance for unstable motion than sales demos suggest.
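A quick way to spot the pops and jumps described above is to look at the speed a solved joint implies between frames. The sketch below is a minimal check under stated assumptions: positions in metres, a constant frame rate, and a `max_speed` threshold you would tune per joint and per action. `flag_pops` is our own illustrative name, not part of any solver's API.

```python
import math
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def flag_pops(track: Sequence[Vec3], fps: float, max_speed: float) -> List[int]:
    """Return frame indices where a joint's implied speed exceeds max_speed.

    track holds one joint's solved position per frame (assumed metres);
    speed is the frame-to-frame displacement multiplied by the frame rate.
    A sudden spike usually means the solve jumped, not the performer.
    """
    flagged = []
    for i in range(1, len(track)):
        speed = math.dist(track[i], track[i - 1]) * fps
        if speed > max_speed:
            flagged.append(i)
    return flagged
```

A single-frame pop usually shows up as two flagged frames, the jump away and the jump back, so reviewing flags in pairs is a useful habit.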

From detected joints to usable animation data

After pose estimation, the system reconstructs a 3D skeleton in a shared space, then prepares it for use. That usually means filtering noise, resolving foot contact, standardising hierarchy, and retargeting the motion onto the destination rig or runtime skeleton. This is the point many generic explainers skip. Capture quality is only half the job. The other half is whether the data behaves properly in Unreal, Unity, Maya, MotionBuilder, or a custom real-time pipeline without introducing days of avoidable cleanup. In studio terms, three factors decide whether markerless data is production-ready:
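Resolving foot contact can be approached in many ways. One simple heuristic, sketched below, labels a foot as planted when it is close to the floor and barely moving horizontally, then pins its ground-plane position for the duration of each contact. The y-up axis, metre units, and both thresholds are assumptions; `detect_contacts` and `lock_contacts` are hypothetical helpers, and a production solve would normally blend in and out of contacts and re-solve the leg with IK rather than move the foot alone.

```python
import math
from typing import List, Optional, Sequence, Tuple

Vec3 = Tuple[float, float, float]  # (x, y, z), assuming y is up and units are metres


def detect_contacts(foot: Sequence[Vec3], fps: float,
                    max_height: float = 0.05, max_speed: float = 0.2) -> List[bool]:
    """Label frames where the foot is low and nearly still as planted."""
    contacts = []
    for i, p in enumerate(foot):
        prev = foot[i - 1] if i > 0 else p
        # Horizontal (ground-plane) speed only; vertical motion is ignored here.
        ground_speed = math.hypot(p[0] - prev[0], p[2] - prev[2]) * fps
        contacts.append(p[1] <= max_height and ground_speed <= max_speed)
    return contacts


def lock_contacts(foot: Sequence[Vec3], contacts: Sequence[bool]) -> List[Vec3]:
    """Pin x/z to the first frame of each contact run to remove foot slide."""
    out: List[Vec3] = []
    anchor: Optional[Vec3] = None
    for p, planted in zip(foot, contacts):
        if planted:
            if anchor is None:
                anchor = p  # start of a new contact run
            out.append((anchor[0], p[1], anchor[2]))
        else:
            anchor = None
            out.append(p)
    return out
```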

- Camera layout defines the ceiling. Weak angles and inconsistent coverage create ambiguity that software cannot fully repair later.

- Movement design affects solve stability. Fast rotation, self-occlusion, floor contact, and handheld props all increase interpretation errors.

- Rig prep determines how much value you keep. Clean capture can still fail in retargeting if bone orientation, hierarchy, or scale conventions are not aligned early.
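Misaligned naming and hierarchy are cheap to catch before retargeting and expensive to catch after. The sketch below checks a bone-name map between a source skeleton and a target rig, assuming each skeleton is described as a simple child-to-parent dictionary. The `check_retarget_map` helper is illustrative, not taken from any particular tool, and bone orientation and scale would need their own checks.

```python
from typing import Dict, List, Optional

Hierarchy = Dict[str, Optional[str]]  # bone name -> parent name (None for the root)


def check_retarget_map(source: Hierarchy, target: Hierarchy,
                       bone_map: Dict[str, str]) -> List[str]:
    """List naming and hierarchy problems before any motion is retargeted.

    bone_map maps source bone names to target bone names. The parent check
    is deliberately strict: if a mapped source bone's parent is also mapped,
    the target bone's direct parent must be that mapped parent.
    """
    problems = []
    for bone in source:
        if bone not in bone_map:
            problems.append(f"unmapped source bone: {bone}")
    for src, tgt in bone_map.items():
        if src not in source:
            problems.append(f"source bone not found: {src}")
            continue
        if tgt not in target:
            problems.append(f"target bone not found: {tgt}")
            continue
        src_parent = source[src]
        if src_parent in bone_map and target[tgt] != bone_map[src_parent]:
            problems.append(
                f"parent mismatch: {src} -> {tgt} expects parent "
                f"{bone_map[src_parent]}, target has {target[tgt]}")
    return problems
```

For example, a target rig with an extra neck bone between the spine and head would be flagged as a parent mismatch for the head. That may be acceptable, but the strict check makes someone decide before the engine does.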

The software does not rescue a poorly designed capture volume. It reveals the weaknesses in it.

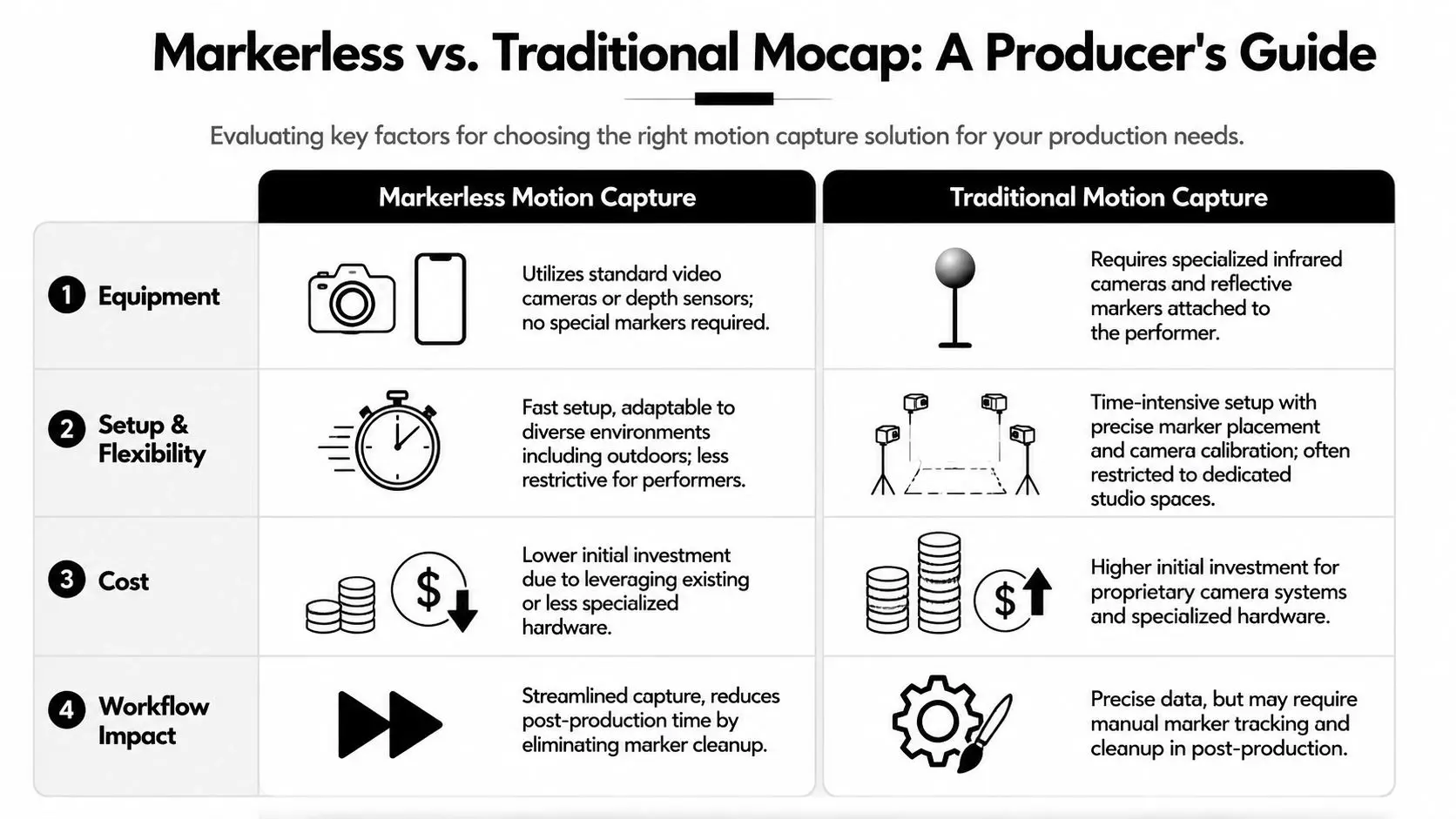

Markerless vs Traditional Mocap: A Producer's Guide

The key buying question isn't which system sounds more advanced. It's which system protects the schedule, serves the performance, and delivers data the animation team can trust.

Accuracy and setup are no longer the old trade-off

A lot of outdated advice still assumes that markerless capture is convenient but substantially less precise. That isn't a safe assumption anymore. According to technical guidance on markerless motion capture accuracy, markerless systems can achieve sub-centimetre positional accuracy (below 1 cm) and angular precision of 3°, with output described as comparable to traditional marker-based systems, all without retroreflective markers. That matters because it changes the conversation from "precision versus speed" to "where do we need precision, and what kind?" For many body performance tasks, the question isn't whether markerless can work. It's whether the production is prepared to use it well.

A side-by-side production view

| Production factor | Markerless motion capture | Traditional marker-based mocap |
|---|---|---|
| Performer prep | No retroreflective markers on the body | Requires markers and physical prep |
| Setup pace | Faster to get talent moving because marker application is removed | More time goes into suit and marker workflow |
| Actor freedom | Strong fit for natural, unencumbered movement | Can feel more controlled and stage-managed |
| Capture environment | Flexible, but highly dependent on camera design and visibility | Controlled studio setups tend to be more predictable |
| Data behaviour | Strong for many performance tasks, but quality depends on occlusion management | Established and dependable for tightly controlled capture |
| Post concerns | Less marker-related cleanup, but still needs solve review and retargeting checks | Marker tracking and cleanup can add labour |
| Best fit | Episodic performance, previs, XR, rapid iteration | Precise interaction-heavy work and deeply controlled shoots |
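Accuracy figures like the ones quoted above only become meaningful for your shoot if you can measure against something. A minimal sketch, assuming you have a reference capture of the same take (for example, a simultaneous marker-based recording) already aligned in time and space, with positions in metres: RMS joint position error covers the positional side, and the angle between estimated and reference bone directions covers the angular side. These are common metrics but not the only ones, and the function names are our own.

```python
import math
from typing import Sequence, Tuple

Vec3 = Tuple[float, float, float]


def rms_position_error(est: Sequence[Vec3], ref: Sequence[Vec3]) -> float:
    """Root-mean-square distance between estimated and reference joint positions."""
    if not est or len(est) != len(ref):
        raise ValueError("need equal-length, non-empty sequences")
    return math.sqrt(sum(math.dist(a, b) ** 2 for a, b in zip(est, ref)) / len(est))


def bone_angle_error_deg(est_parent: Vec3, est_child: Vec3,
                         ref_parent: Vec3, ref_child: Vec3) -> float:
    """Angle in degrees between the estimated and reference directions of one bone."""
    u = [c - p for c, p in zip(est_child, est_parent)]
    v = [c - p for c, p in zip(ref_child, ref_parent)]
    cos_angle = sum(a * b for a, b in zip(u, v)) / (math.hypot(*u) * math.hypot(*v))
    # Clamp to guard acos against tiny floating-point overshoot.
    return math.degrees(math.acos(max(-1.0, min(1.0, cos_angle))))
```

With positions in metres, "sub-centimetre" means an RMS error below 0.01; per-bone angle errors can be averaged or inspected per joint, since hands and feet often behave differently from the torso.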

What usually works best

For dialogue-heavy scenes, broad body performance, previs, or stylised character work where the actor needs freedom, markerless capture is often the more practical choice. It removes friction at exactly the point where directors want flexibility. For very technical scenes with complex physical interactions, contact-sensitive props, or a need for highly controlled repeatability, traditional marker-based stages still have a clear role. That's especially true when the shot depends on exact interaction beats that must line up reliably across multiple takes. A producer should weigh four questions before choosing:

- What is the deliverable really asking for? A realistic body pass for animation isn't the same as dense technical interaction with props.

- How much iteration will happen on the day? If the director wants to explore, markerless often supports that rhythm better.

- Where is the tolerance for cleanup? Fast capture only helps if downstream teams aren't overwhelmed later.

- Who is operating the pipeline? The same system can feel efficient or chaotic depending on the crew running it.

What doesn't work in practice

What fails most often isn't the technology category. It's the mismatch between method and brief. Markerless capture struggles when teams assume "no markers" means "no discipline". You still need clear camera lines, consistent conditions, and a sensible retargeting plan. Traditional mocap struggles when teams over-invest in a technically perfect setup for work that really needed speed, actor comfort, and earlier creative review. The best production decisions come from identifying where the expensive mistakes would happen. On some projects that's on set. On others it's in cleanup, rigging, or engine integration.

Real-World Production Pipelines and Integrations

A markerless session only earns its keep if the data moves cleanly into the rest of production. Capture is just the front end. The real work starts when the performance has to survive editorial review, rig retargeting, animation polish, and engine delivery.

A practical studio workflow

A typical markerless pipeline looks something like this:

- Capture planning

- Acquisition on set

- Initial solve and review

- Cleanup and filtering

- Retargeting

- Integration into DCCs or engines
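One way to make the stages above concrete is to treat each as a gate a take must pass before moving on. The sketch below assumes a take is a plain dictionary and that each gate is a check your team defines; the stage keys follow the list, while `advance_take` and the dictionary layout are illustrative assumptions rather than any tool's data model.

```python
from typing import Callable, Dict, List, Tuple

# Stage names mirror the workflow list; the gate checks themselves are supplied by the team.
STAGES: List[str] = [
    "capture_planning",
    "acquisition",
    "initial_solve_review",
    "cleanup_filtering",
    "retargeting",
    "integration",
]

Gate = Callable[[dict], bool]


def advance_take(take: dict, gates: Dict[str, Gate]) -> Tuple[str, bool]:
    """Run a take through each stage in order, stopping at the first failed gate.

    Stages without a gate pass automatically. Returns the stage where the take
    stopped (or the final stage) and whether every gate passed.
    """
    for stage in STAGES:
        gate = gates.get(stage)
        if gate is not None and not gate(take):
            return stage, False
    return STAGES[-1], True
```

For example, a gate on `initial_solve_review` might reject any take with flagged pop frames, so a weak solve stops there instead of surfacing during retargeting.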

Where the pipeline usually gets difficult

The hard part isn't proving that markerless capture can track a person. The hard part is keeping the workflow stable when you need real-time feedback, multiple cameras, and production-ready output at once. Research has shown that multi-camera markerless motion capture can reach high accuracy, but the same research offers limited guidance on how to optimise camera placement, frame rates, and processing pipelines for live broadcast or interactive media. That production gap is called out in this research discussion of real-time optimisation challenges in markerless setups. For teams building content in Unity or Unreal, that gap matters immediately. You aren't just solving for body motion. You're solving for latency, operator usability, render synchronisation, and whether a director can make decisions without waiting for a long technical turnaround.

If your use case is live or near-live, the bottleneck usually isn't capture accuracy. It's the total time between movement and confident decision-making.
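Treating live latency as a budget makes the "movement to decision" question measurable, at least for the machine side. The sketch below sums per-stage latencies and reports the slowest stage. The stage names are assumptions, any numbers you feed it should come from measuring your own setup, and the time a director needs to actually judge a take is outside what it can capture.

```python
from typing import Dict, NamedTuple


class LatencyReport(NamedTuple):
    total_ms: float
    slowest_stage: str
    within_budget: bool


def latency_report(stage_ms: Dict[str, float], budget_ms: float) -> LatencyReport:
    """Sum measured per-stage latency (movement to on-screen result) and compare to a budget."""
    if not stage_ms:
        raise ValueError("no stages measured")
    total = sum(stage_ms.values())
    slowest = max(stage_ms, key=stage_ms.__getitem__)  # stage with the largest latency
    return LatencyReport(total, slowest, total <= budget_ms)
```

The slowest stage is usually where optimisation effort pays off first; shaving a few milliseconds elsewhere rarely changes whether the director can work at performance speed.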

Unity, Unreal, and handoff discipline

Once the body data is clean enough, integration becomes a pipeline issue, not a mocap issue. Skeleton naming, rig compatibility, root motion conventions, and animation compression settings all affect how useful the result is. Teams often benefit from understanding the wider virtual production equipment choices that shape creative studio workflows, because markerless capture doesn't live in isolation. It interacts with tracking, render systems, stage review, and delivery tools. A reliable handoff usually depends on a few habits:

- Lock the target rig early. Late rig changes create avoidable retargeting problems.

- Test representative motion first. Don't validate a pipeline with an easy walk cycle if the brief involves turns, crouches, or contact.

- Review in the destination context. Motion that feels fine in a solver viewport may read differently in-engine.

- Separate creative approval from technical approval. A good performance can still need technical fixes before it's ready to ship.
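Root motion conventions are one of the handoff details that trip teams up. A common convention puts a root bone on the floor directly beneath the pelvis, carrying horizontal travel, while the pelvis keeps only its height above that root. The sketch below assumes that convention and handles translation only; `split_root_motion` is a hypothetical helper, and your rig's own convention and engine settings should take precedence.

```python
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def split_root_motion(pelvis: Sequence[Vec3], up_axis: int = 1) -> Tuple[List[Vec3], List[Vec3]]:
    """Split a world-space pelvis trajectory into ground-plane root motion
    and a pelvis offset relative to that root.

    up_axis=1 assumes y-up (as in Unity); use up_axis=2 for z-up (as in Unreal).
    Facing/yaw extraction is deliberately left out.
    """
    root: List[Vec3] = []
    local: List[Vec3] = []
    for p in pelvis:
        r = [p[0], p[1], p[2]]
        r[up_axis] = 0.0  # root stays on the floor directly under the pelvis
        root.append((r[0], r[1], r[2]))
        local.append((p[0] - r[0], p[1] - r[1], p[2] - r[2]))
    return root, local
```

Keeping the up axis explicit is the main design choice here: the same capture can feed a y-up and a z-up engine, and a silent axis mismatch is one of the easier ways to break root motion at handoff.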

Applications Driving Innovation Across Industries

Markerless motion capture matters because it opens up workflows that used to be too slow, too intrusive, or too stage-dependent for many projects. Its value changes depending on the sector, but the pattern is the same. Teams get access to believable human movement with fewer barriers between capture and use.

Broadcast and episodic animation

For children's series, stylised TV projects, and digital shorts, body performance often needs to feel expressive without becoming technically over-engineered. Markerless capture is useful here because it helps teams collect natural movement quickly, then shape it for the final character style. That doesn't mean every shot should be direct mocap-to-final. In many broadcast workflows, the captured motion serves as a strong base layer. Animators still adjust silhouette, timing, weight, and exaggeration to fit the show's visual language. The gain is speed at the blocking and performance stage, not the removal of animation craft.

XR and immersive experiences

XR projects benefit for a different reason. Interactivity changes the value of motion data. The movement may need to work across looping behaviours, reactive characters, or real-time avatar systems rather than a locked linear scene. In that context, markerless capture can help teams prototype quickly. A performer can test gestures, reactions, and movement states without the same studio overhead that a more traditional mocap day might require. That suits rapid iteration in immersive installations, event experiences, and engine-driven applications where behaviour design evolves alongside visual production.

Some of the best uses of markerless capture aren't about replacing keyframe animation. They're about getting to the right motion language faster.

Training, analysis, and simulation

Outside entertainment, the technology has clear value in motion analysis and simulation. Because the performer isn't covered in physical markers, the captured movement can feel closer to ordinary behaviour. That's useful when the goal is to observe how someone moves in a more natural state. For educational simulations, biomechanics-informed visualisation, or technical training content, that can be a strong advantage. The body data becomes a bridge between real motion and digital explanation.

Brand content and digital humans

Corporate communications, product storytelling, and digital presenter formats are also pushing this area forward. Brands increasingly want character-led or avatar-based communication that doesn't feel stiff. Markerless capture supports that by giving teams a workable performance base without turning a simple content session into a specialist lab booking. The strongest results usually come when the team chooses the right level of realism. Not every avatar needs fully photoreal motion. Often the better creative choice is a stylised performance that preserves natural timing and intention while fitting the brand world.

Evaluating Cost Accuracy and Studio Partners

A producer usually reaches this point after the early excitement wears off. The tests look good, the creative team can see the potential, and then the practical questions land. What will this cost in real production terms, how accurate does it need to be for the brief, and who can run it without creating avoidable cleanup later?

There is no single market rate that tells the whole story, especially for broadcast, XR, and mixed engine pipelines where the capture session is only one line in the budget. Accuracy also needs context. A stylised real-time avatar, a digital presenter for broadcast graphics, and a hero character for close-up animation do not carry the same tolerance for foot slip, finger detail, or retargeting error. At Studio Liddell, that difference usually matters more than any headline promise about AI capture quality.

The useful question is not "What does markerless mocap cost?" It is "What does this project need from capture, and what will it take to get reliable motion into the final pipeline?"

What to price properly

Teams often underestimate the parts of the budget that sit after the shoot. The camera setup and software licence matter, but so do solve review, motion cleanup, retargeting, rig adjustment, and engine testing. If those stages are not priced early, the quote can look efficient right up until the delivery schedule starts slipping. A practical cost review usually covers four areas:

- Capture setup: camera coverage, stage constraints, lighting control, calibration time, and whether the performance includes difficult actions such as floor work, fast turns, props, or performer interaction.

- Processing and solving: the time needed to review takes, reject weak data, rerun solves, and prepare motion that animation or technical teams can use.

- Pipeline integration: retargeting to the destination rig, validation in Unreal or Unity, file interchange, naming conventions, and handoff into the wider production workflow.

- Operator experience: the people running the session affect quality as much as the toolset. An inexpensive setup becomes costly if the team cannot diagnose bad solves or protect the downstream pipeline.

That last point gets missed a lot. A markerless session can be cheaper than a traditional volume for the right brief. It can also become more expensive if the project demands heavy cleanup, precision hand interaction, or repeated technical fixes because the capture plan was too optimistic. The savings are real, but they only hold if the capture specification matches the delivery target.
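The point about post-shoot costs is easy to check on any estimate: tag each line item by whether it happens after capture and see what share of the total that is. A minimal sketch; the `LineItem` structure is illustrative, and any figures you run through it should be your own. Operator experience is deliberately not a separate line, since it affects every stage.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class LineItem:
    name: str
    cost: float        # any consistent unit: currency, days, or hours
    after_shoot: bool  # True for work that happens once capture is finished


def post_shoot_share(items: List[LineItem]) -> float:
    """Fraction of the total estimate that sits after the capture day."""
    total = sum(item.cost for item in items)
    if total == 0:
        return 0.0
    return sum(item.cost for item in items if item.after_shoot) / total
```

For instance, with placeholder figures of 5 units for capture setup, 2 for processing and solving, and 3 for pipeline integration, half of the estimate sits after the shoot. A quote that only prices the first line is hiding the other half.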

Accuracy is a production question, not a brochure question

Accuracy should be judged against the shot, the rig, and the review standard. For previsualisation, live XR content, and many presenter-driven experiences, markerless capture can be more than adequate and much faster to deploy. For body mechanics that need to hold up under close inspection, or for performances with frequent occlusion and complex partner contact, the margin for error gets tighter. That is why we scope from the output backwards. If the final asset lives inside a real-time graphics pipeline, we test for stability in engine. If the brief is character animation for polished delivery, we assume some cleanup and build that into the schedule and budget from day one.

Why the studio partner changes the result

The tool matters. The studio matters more. A good partner does not just book a capture day. They define what "usable" means for your project, choose a capture method that fits that standard, and account for the fixes that are likely to appear later. That is where weak supplier selection shows up. Not in the sales deck, but in retargeting delays, unusable takes, and last-minute compromises when the director starts reviewing motion in context. Here is the difference in practice:

| Decision area | Weak approach | Strong approach |
|---|---|---|
| Project scoping | Treats every brief as a generic mocap job | Matches capture choices to the character, output format, and review process |
| Budget planning | Quotes for the session only | Prices cleanup, retargeting, testing, and revisions |
| Technical setup | Focuses on software features | Plans the full route from performance to final delivery |
| Risk control | Assumes edge cases will be fixed later | Flags likely failure points early, including occlusion, rig mismatch, and engine review issues |

The cheapest quote often shifts cost into post.

For clients comparing suppliers, the best questions are operational ones. Who reviews solve quality during the session? What happens when a take fails? How is motion validated on the destination rig? Who owns the handoff between capture, animation, and engine integration? Clear answers here are usually more useful than any feature list. If you are weighing broader vendor capability as well as mocap expertise, our guide to choosing an animation and visual effects studio gives a practical framework for comparing partners beyond the pitch.

If you're considering markerless motion capture for animation, broadcast, games, or XR, Studio Liddell can help you scope the right approach for the work, not just the technology. Book a production scoping call to assess feasibility, pipeline fit, and the most practical route from capture to delivery.