A Producer's Guide to Artificial Reality Games

Ever thought about a game that doesn't just live on a screen but actually spills out into the world around you? Or one that pulls you into a completely different reality? That's the essence of artificial reality games (ARGs), a broad term for immersive experiences that blend our physical world with digital ones to create truly unforgettable audience engagement.

What Are Artificial Reality Games

Artificial reality games aren't tied to a single piece of tech. Think of the term as a catch-all for interactive applications that play with our perception of reality. These experiences can either overlay digital information onto our real-world view or drop us into an entirely new, completely digital environment. For producers and brand managers, this is a category worth paying attention to. It’s moved well beyond simple entertainment and is now a powerful tool for business, training, marketing, and communicating complex technical ideas.

The Spectrum of Reality

To really get your head around artificial reality games, it helps to see them on a spectrum. The most well-known points on this spectrum are Augmented Reality (AR) and Virtual Reality (VR), with Mixed Reality (MR) acting as the bridge between them.

- Augmented Reality (AR): Picture AR as a magic window on the world. It layers computer-generated information, like text, images, or 3D models, onto your view of your physical surroundings. A prime example is Pokémon GO, where digital creatures appear on your phone screen as if they’re right there in the park with you. For brands, this could mean an AR app for an exhibition stand that lets visitors see a full-scale digital product demo.

- Virtual Reality (VR): VR, on the other hand, is like stepping through a portal. It completely shuts out the real world and replaces it with a fully digital one, usually viewed through a headset. This technology creates a powerful sense of presence, making you feel like you are truly there. It's perfect for deep immersion in games, medical and training simulations, or narrative-driven short films.

The umbrella term that covers all of this, AR, VR, and everything in between, is Extended Reality (XR). This term neatly wraps up any real-and-virtual combined environment and the human-machine interactions that come with it. To help clarify how these technologies fit together, here's a quick breakdown:

AR vs VR vs XR: A Quick Comparison

This table breaks down the core differences between Augmented, Virtual, and Extended Reality to clarify their unique roles in artificial reality games.

| Technology | Core Concept | User Experience | Example Application |
|---|---|---|---|
| Augmented Reality (AR) | Overlays digital information onto the real world. | Interacts with the real world through a device like a phone or smart glasses. | Pokémon GO, IKEA's furniture placement app, an AR product configurator for retail. |
| Virtual Reality (VR) | Creates a fully immersive, completely digital environment. | Replaces the real world with a virtual one, viewed through a headset. | A flight simulator for pilot training, a fantasy game like Half-Life: Alyx, a location-based VR (LBVR) game. |
| Extended Reality (XR) | An umbrella term for all immersive technologies. | Encompasses the full spectrum from AR to VR and everything in between. | A marketing campaign that uses both AR filters and a VR experience. |

While each technology has its own strengths, they all share one goal. An ARG uses technology to create a new layer of interaction over our existing world. Whether it’s augmenting a physical space or creating a new virtual one, the aim is a more engaging, memorable, and effective experience than traditional screen-based media can offer.

Beyond Gaming and Into Business

Don't let the word "games" fool you; the applications for this technology are firmly business-focused. An AR app, for instance, could let a customer at an exhibition see a full-scale virtual model of a huge piece of machinery right there on the showroom floor. A VR simulation can train a surgeon on a delicate procedure in a completely risk-free environment, ensuring compliance and accuracy. These experiences work because they engage people on a much deeper, more physical level. For a closer look at how these worlds are combined, our complete guide to mixed reality provides more context. As we'll get into, this tech isn't just about creating a "wow" moment; it's about delivering real, measurable business results, from higher audience engagement at events to better knowledge retention in corporate training.

The Technology Behind Immersive Worlds

So, what exactly brings the digital realities of an artificial reality game to life? It comes down to a combination of real-time game engines, smart software, and responsive hardware working together to create believable, interactive worlds. For any decision-maker planning a project in this space, getting to grips with these components is the first crucial step.

The market for this technology is growing quickly. In the UK alone, the immersive virtual reality market hit USD 1,239.9 million in revenue and is on track to reach USD 5,254.1 million by 2030. This growth is being driven by software innovations that are making complex, immersive experiences more accessible than ever before.

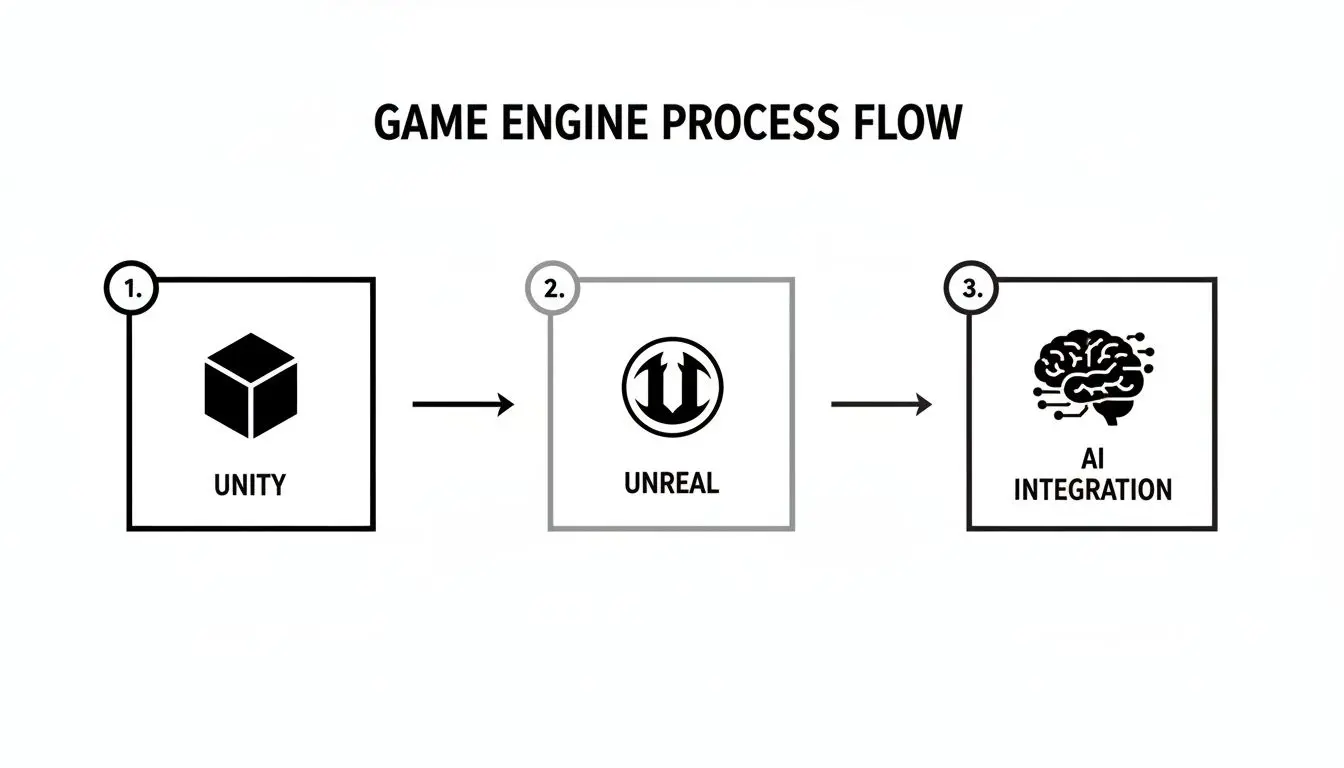

Choosing Your Engine: Unity vs Unreal

At the heart of nearly every artificial reality game, you’ll find one of two industry-leading real-time game engines: Unity or Unreal Engine. While both are fantastic tools, they each have their own unique strengths that make them a better fit for different kinds of projects. A key decision for any producer is understanding which engine best suits their goals for render quality, team skills, and timeline. Think of an engine as the digital "factory" where your world is built. It’s the foundation that provides all the core physics, rendering power, and interaction frameworks you need to bring an experience to life.

- Unreal Engine: If your main goal is jaw-dropping, photorealistic visuals, Unreal is often the front-runner. Its rendering pipeline is famous for producing cinematic-quality graphics, making it perfect for high-impact brand showcases, architectural visualisations, or games where visual fidelity is absolutely everything.

- Unity: Unity’s biggest advantage is its incredible flexibility and cross-platform support. It’s a master at deploying experiences across a huge range of devices, from everyday mobile phones for AR apps to high-end VR headsets like the Meta Quest. This makes it the ideal choice for projects that need to reach the widest possible audience, especially in VR production where Quest optimisation is key.

The decision isn't just about how good it looks; it's a strategic choice. It affects your development workflow, the skills your team needs, and where you can ultimately deploy your experience. To get a better sense of this, have a look at our guide on mastering Unity development for business and XR experiences.

Choosing an Engine: A Quick Analogy

Unreal Engine is like a high-end film studio, perfectly equipped for creating visually stunning blockbusters with a real cinematic flair. Unity is like a versatile, all-purpose workshop, capable of building anything from an intricate museum model to a mass-produced product for global distribution.

The Role of AI in Creating Living Worlds

Modern artificial reality games depend heavily on Artificial Intelligence (AI) to make their worlds feel alive and dynamic, not just like a static, scripted movie set. AI isn’t just a buzzword here; it's a set of practical tools we use to solve tricky production problems and make the user’s experience far more engaging. You can really see AI's influence in a few key areas:

- Smarter Characters: AI is what drives the behaviour of non-player characters (NPCs) in both entertainment and training. In a VR training simulation, AI-driven characters can react realistically to a trainee's actions, creating more believable and effective learning scenarios. In character animation, this translates to more lifelike performances.

- Workflow Optimisation: On the production side of things, AI-powered tools can automate a lot of the repetitive, time-consuming jobs like creating different versions of an asset or optimising 3D models. This frees up our creative teams to focus on the details that really matter, which helps speed up development and keep costs down.

- Procedural Generation: For truly massive worlds, AI can be used to procedurally generate environments, quests, and other content. This not only ensures a fresh experience for users every time they jump in but also lets us build vast digital spaces that would be practically impossible to create by hand.
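To make the procedural generation idea more concrete, here is a minimal Python sketch. It is an illustration only, not a description of how any particular engine or studio pipeline works: the tile names, the weights, and the `generate_level` function are all hypothetical, and it uses plain seeded randomness rather than a trained AI model. What it does show is the core principle that a seed makes generated content reproducible, so the same seed rebuilds the same layout while a new seed gives a fresh one.

```python
import random

# Hypothetical tile types and weights, chosen purely for illustration.
TILES = ["grass", "water", "rock", "tree"]
WEIGHTS = [0.5, 0.2, 0.15, 0.15]


def generate_level(seed: int, width: int = 8, height: int = 4) -> list[list[str]]:
    """Build a small tile grid from a seed.

    The same seed always produces the same grid, which keeps
    generated content reproducible for QA and debugging.
    """
    rng = random.Random(seed)  # local generator, so global random state is untouched
    return [rng.choices(TILES, weights=WEIGHTS, k=width) for _ in range(height)]


if __name__ == "__main__":
    # Print the first letter of each tile as a rough text map.
    for row in generate_level(seed=42):
        print(" ".join(tile[0].upper() for tile in row))
```

In a real production the same pattern would typically drive far richer content, but the trade-off is the same: a fixed seed helps testers reproduce a bug, while a varying seed delivers the "fresh every time" feel described above.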

Hardware: From Seeing to Feeling

Finally, the hardware is what makes the whole experience tangible. While the engine builds the world, the hardware is the user's gateway into it. High-resolution VR headsets provide the stunning visual immersion, but it's the addition of other peripherals that elevates an experience from simply 'seeing' to truly 'feeling'. Take haptic feedback suits and gloves, for example. These use targeted vibrations and force feedback to simulate the sensation of touch. This makes interactions feel far more physical and real, something that's absolutely critical for skill-based training simulations and deeply immersive entertainment. By engaging more of the user's senses, the experience becomes vastly more memorable and effective.

Your Production Pipeline for Artificial Reality Games

So, how does a great idea evolve into a fully-fledged immersive experience? It all comes down to a clear, well-managed production pipeline. This is our roadmap, the process that turns your business goals into a polished application that works, keeping your project on time and on budget.

Everything kicks off with concept and discovery. This is where we sit down together to really nail down your main objectives, figure out who your audience is, and set the key performance indicators (KPIs) that will define success. A solid brief at this stage is the bedrock of the entire project.

From there, we move into pre-production. This is the creative blueprinting phase, where we map out the user’s entire journey with storyboards and pre-visualisation (previz). We plan every interaction and detail before a single line of code is written, which ensures the core experience feels right from the very beginning. For a process like this, adopting agile methods can make a world of difference. You can learn more about effective agile game development project planning to keep things flexible and responsive.

The Core Production Phases

With the blueprint signed off, the main production cycle begins. This is where we build the world and bring characters to life through rigging and animation, and where the experience truly starts to take shape. It’s a process that blends pure creativity with deep technical skill.

- Asset Creation: Our artists get to work creating all the sights and sounds of your world. This covers everything from 3D models, textures, and environments to character animations, sound effects, and music. The process often moves from previz to final lighting and compositing.

- Development: Our developers take all those assets and bring them into the game engine of choice, usually Unity or Unreal. They build the game mechanics, code the interactions, and implement the logic that makes it all work.

- Rigorous QA Testing: Throughout the entire development process and especially before launch, the project goes through intense Quality Assurance (QA) testing. We hunt down bugs, check performance on different devices, and polish the user experience to guarantee a flawless final product for broadcast or public deployment.

The game engine is the heart of this process, acting as the central hub where all the art, sound, and code come together. Unity and Unreal Engine are the core platforms we build upon, and AI tools play an increasingly important role, helping us to work smarter and create more dynamic content.

The Art of Digital Choreography

Designing for an immersive world needs a completely different way of thinking about User Experience (UX). It’s not about clicking buttons on a screen; it’s about guiding someone through a three-dimensional space. We have a term for this: digital choreography.

Digital choreography is the art of designing intuitive interactions and guiding a user's attention within an immersive space to create a comfortable, engaging, and effective experience. It’s how we prevent motion sickness and make the virtual world feel natural.

This means we have to carefully choreograph every part of the user’s sensory experience. We think about how people will move, where their eyes will naturally look, and how they’ll interact with virtual objects. For example, in a VR training sim, we might use a subtle audio cue to draw attention to a vital switch, or design an interface that feels physically satisfying to use. The aim is to make the technology itself disappear, letting the user get completely lost in the experience.

Getting this wrong can lead to frustration or, even worse, motion sickness, which can ruin an otherwise brilliant application. That’s why we focus so closely on stable frame rates and careful interaction design. When we master this digital choreography, the final product isn’t just impressive; it’s genuinely comfortable and a joy to use.
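As a rough illustration of what "stable frame rates" means in practice, the Python sketch below works out the time available to render each frame and checks how many frames in a sample missed it. The 90 Hz target and the sample frame times are assumptions made for this example, and the function names are hypothetical; real projects would read frame timings from the engine's own profiling tools.

```python
def frame_budget_ms(refresh_hz: float) -> float:
    """Time available to render one frame at a given display refresh rate."""
    return 1000.0 / refresh_hz


def dropped_frame_ratio(frame_times_ms: list[float], refresh_hz: float) -> float:
    """Fraction of frames that took longer than the per-frame budget."""
    if not frame_times_ms:
        return 0.0
    budget = frame_budget_ms(refresh_hz)
    over = sum(1 for t in frame_times_ms if t > budget)
    return over / len(frame_times_ms)


if __name__ == "__main__":
    # Hypothetical frame times (ms) captured during a test session.
    samples = [10.2, 10.8, 11.0, 14.5, 10.9, 10.4, 16.1, 10.7]
    print(f"Budget at 90 Hz: {frame_budget_ms(90):.2f} ms")
    print(f"Frames over budget: {dropped_frame_ratio(samples, 90):.0%}")
```

At an assumed 90 Hz, each frame has roughly 11 ms to render, which is why performance checks tend to run throughout production rather than only at the end.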

Driving Business Results with Immersive Experiences

Sure, the 'wow' factor of immersive tech is great, but for any serious business, the real question is about results. Artificial reality games aren't just novelties; they are strategic tools we design to solve specific commercial problems and deliver a measurable return on investment (ROI). It's about moving past the spectacle to create experiences that hit concrete business goals.

This focus on results-driven content is exactly what's driving market growth. The UK's AR and VR market, which underpins these games, reached USD 1.8 billion in 2024 and is expected to climb to USD 6 billion by 2033. This growth is heavily fuelled by demand in sectors like gaming, education, and specialist training, where VR simulations are reported to cut learning curves by up to 30%. You can get the full picture on these market trends from IMARC Group.
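To put the forecast in annual terms, the short Python sketch below derives the compound annual growth rate (CAGR) implied by the two figures quoted above. The rate is our own arithmetic from those two numbers, not a figure taken from the cited research, and the `cagr` helper is a hypothetical name used for illustration.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values over a number of years."""
    return (end_value / start_value) ** (1 / years) - 1


if __name__ == "__main__":
    # Figures quoted above: USD 1.8 billion (2024) to USD 6 billion (2033).
    rate = cagr(1.8, 6.0, 2033 - 2024)
    print(f"Implied CAGR: {rate:.1%}")
```

On those inputs the implied growth works out to roughly 14% a year, compounding over the nine years from 2024 to 2033.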

Boosting Engagement in Marketing and Events

For marketing teams, artificial reality games are a way to build deep, memorable connections that passive advertising just can't touch. A well-designed VR game or an interactive AR filter for retail creates an emotional bond that boosts brand recall and loyalty long after the event is over. These are the kinds of experiential marketing activations that get people talking. Think about the classic challenge of grabbing attention at a crowded exhibition. An AR app can turn a simple product display into a captivating interactive demo.

- Increased Dwell Time: Visitors who might have walked straight past are drawn in, spending much more time at your stand.

- Qualified Lead Generation: The experience can be designed to capture user details or pre-qualify their interest, turning passing curiosity into solid sales leads.

- Memorable Product Showcases: Instead of just playing a video, you let potential clients virtually interact with, customise, or see your product at full scale.

Enhancing Training and Skill Development

When it comes to training and education, immersive experiences offer a safe, cost-effective, and endlessly repeatable learning environment. This is especially useful for complex or high-risk tasks where real-world practice is either impractical or downright dangerous. In a medical or technical VR training simulation, mistakes become learning opportunities, not expensive accidents.

VR training shifts learning from just theory into actual practice. It lets trainees build muscle memory and decision-making skills in a controlled space, which leads to dramatically better retention and on-the-job performance.

For instance, a VR simulation can train medical staff on a new surgical procedure or walk engineers through maintaining complex machinery. The results aren't just anecdotal; they're backed by data. We can embed assessment rubrics to measure performance metrics, completion times, and error rates right inside the simulation, giving you clear evidence of skill improvement and compliance.
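As a simple illustration of how in-simulation assessment could be expressed, the Python sketch below compares a trainee's first and latest attempts and applies a basic pass/fail rubric. The `Attempt` fields, the thresholds, and the sample numbers are all hypothetical; a real rubric would be defined with subject-matter experts for the specific task.

```python
from dataclasses import dataclass


@dataclass
class Attempt:
    """One trainee run through a simulated task (fields are illustrative)."""
    completion_seconds: float
    errors: int


def error_reduction(first: Attempt, latest: Attempt) -> float:
    """Relative drop in errors between a trainee's first and latest attempt."""
    if first.errors == 0:
        return 0.0  # nothing to reduce
    return (first.errors - latest.errors) / first.errors


def passed(attempt: Attempt, max_seconds: float, max_errors: int) -> bool:
    """Apply a simple pass/fail rubric: fast enough and accurate enough."""
    return attempt.completion_seconds <= max_seconds and attempt.errors <= max_errors


if __name__ == "__main__":
    # Hypothetical data for one trainee.
    first = Attempt(completion_seconds=310.0, errors=8)
    latest = Attempt(completion_seconds=205.0, errors=2)
    print(f"Error reduction: {error_reduction(first, latest):.0%}")
    print("Passed:", passed(latest, max_seconds=240.0, max_errors=3))
```

Because every attempt is logged in the same structure, the same records can feed both an individual's progress report and a compliance summary across a whole cohort.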

Measuring Your Return on Investment

Crucially, the success of an artificial reality game isn't a matter of opinion; it can be measured with clear metrics tied directly to your business objectives. Defining these KPIs right from the start is a vital part of our production process, as it ensures the final experience is built to deliver results. Here are some of the key metrics we focus on:

| Business Sector | Key Performance Indicators (KPIs) | Desired Outcome |
|---|---|---|
| Marketing & Events | User engagement time, lead conversion rates, social shares. | Increased brand awareness and a healthier sales pipeline. |
| Training & Education | Skill retention scores, error reduction rates, task completion speed. | A more competent and compliant workforce. |
| Retail & E-commerce | "Try-before-you-buy" usage, add-to-cart rates, reduced returns. | Higher customer confidence and increased sales. |

By zeroing in on these measurable outcomes, we turn a brilliant creative idea into a strategic business asset that pays for itself. Our goal is always to deliver an experience that not only captivates your audience but also provides a clear and compelling return on your investment.
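For the marketing and events KPIs, the calculation itself can be very simple once sessions are logged. The Python sketch below computes average engagement time and lead conversion rate from a list of session records. The `Session` structure and the sample data are hypothetical; in practice the records would come from whatever analytics the experience is built to capture.

```python
from dataclasses import dataclass


@dataclass
class Session:
    """One visitor's interaction at an event stand (fields are illustrative)."""
    dwell_seconds: float
    became_lead: bool


def average_dwell_seconds(sessions: list[Session]) -> float:
    """Mean engagement time per visitor, or 0.0 when there are no sessions."""
    if not sessions:
        return 0.0
    return sum(s.dwell_seconds for s in sessions) / len(sessions)


def lead_conversion_rate(sessions: list[Session]) -> float:
    """Share of sessions that ended with the visitor becoming a lead."""
    if not sessions:
        return 0.0
    return sum(1 for s in sessions if s.became_lead) / len(sessions)


if __name__ == "__main__":
    # Hypothetical log from a single event day.
    log = [
        Session(120.0, True),
        Session(90.0, False),
        Session(240.0, True),
        Session(150.0, False),
    ]
    print(f"Average dwell time: {average_dwell_seconds(log):.0f} s")
    print(f"Lead conversion rate: {lead_conversion_rate(log):.0%}")
```

The point of the sketch is that these KPIs only become measurable if the experience is designed from the outset to record the underlying events.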

UK Success Stories in Artificial Reality Games

It's one thing to talk about theory and process, but it’s the real-world examples that really show what artificial reality games can do. The UK is a serious hub for this creative technology, and the market numbers back that up. The UK's virtual reality gaming sector alone hit USD 1,376.2 million in 2024, and it's projected to reach USD 4,081.1 million by 2030. You can see the full breakdown in the research on the UK's VR gaming market growth. This isn't just a passing trend; it shows a real, growing appetite for high-quality immersive experiences.

At Studio Liddell, a VR production studio with a base in Manchester, we've been right in the thick of it, helping our clients turn ambitious ideas into engaging, tangible results. These mini case studies give you a glimpse into how we use immersive tech to solve real business challenges, from entertaining huge crowds to making sense of seriously complex data.

High-Throughput Entertainment for Events

When it comes to events, the biggest headache is often how to manage large crowds while still giving every single person a memorable, fun experience. That was the exact problem we tackled with 'Dance! Dance! Dance!', a high-throughput mixed reality event game we designed from the ground up for busy event spaces. The game is simple, fast-paced, and brilliant for drawing a crowd. Players jump onto a stage and see themselves on a massive screen, challenged to copy the moves of a digital avatar. We built the tech to be rock-solid, ensuring it could run all day with minimal fuss and keep a constant flow of people moving through. It’s a perfect showcase of how we engineer an artificial reality game for both peak engagement and operational smoothness, a key consideration for any location-based VR.
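To show what "high-throughput" means as a planning number, here is a small Python sketch of the underlying arithmetic. The play time, changeover time, and station count are hypothetical inputs chosen for illustration; they are not figures from the 'Dance! Dance! Dance!' installation.

```python
def players_per_hour(play_seconds: float, changeover_seconds: float, stations: int = 1) -> float:
    """Players an installation can serve in an hour.

    Each player occupies a station for the play time plus the
    changeover time needed to swap in the next person.
    """
    cycle = play_seconds + changeover_seconds
    return stations * 3600.0 / cycle


if __name__ == "__main__":
    # Hypothetical: a 90-second round with 30 seconds to swap players.
    print(f"One station: {players_per_hour(90, 30):.0f} players/hour")
    print(f"Two stations: {players_per_hour(90, 30, stations=2):.0f} players/hour")
```

The formula makes the design levers visible: shortening the round, speeding up the changeover, or adding stations each raises the number of visitors served per hour.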

Award-Winning Creative Storytelling

Of course, it's not all about high-energy games. We also love diving deep into narrative-driven experiences. Our award-winning VR short film, 'Aurora', is a project we’re incredibly proud of, and it really shows our team's talent for world-class creative storytelling and technical skill.

This project took everything we had, covering the entire VR short film production pipeline from the initial script and performance capture right through to complex spatial sound design. 'Aurora' is proof that VR can be an incredibly powerful tool for creating an emotional connection, pulling audiences into a meticulously crafted world that feels both huge and deeply personal.

This kind of project is a great example of our ability to fuse artistic vision with technical precision, which is absolutely essential for any top-tier artificial reality game. If you're looking for a partner in this space, it pays to know who the main players are. You might find our rundown of the top 7 virtual reality development companies in the UK helpful.

Translating Complexity into Clarity

Not every artificial reality game needs to be set in a fantasy world or involve non-stop action. In fact, one of the most powerful uses for this technology is taking complicated information and making it easy for anyone to understand. Our work on the 'GeoEnergy NI' project is a fantastic technical animation case study of this.

The mission here was to explain very complex geological and energy data to stakeholders in a way that was both clear and compelling. We did this by creating interactive visualisations that let users get hands-on and explore the data for themselves.

- Visual Strategy: We came up with a unique visual style that turned abstract data points into 3D models you could actually understand.

- User Interaction: People could spin the models around and look at the information from different angles, which helped them build a much deeper understanding.

- Stakeholder Impact: The final experience communicated these complex ideas so successfully that it helped secure stakeholder buy-in far more effectively than a slide deck ever could.

This project proves that a key ingredient for effective VR training and B2B communication is the ability to turn complexity into clarity, a skill we bring to every project we take on.

Your Questions About ARG Production Answered

Jumping into the world of artificial reality games can feel like a big move, often bringing up questions around budgets, timelines, and what you actually need to get the ball rolling. We get it. This final section gives you practical, straightforward answers to the questions we hear most often from producers and brand managers, helping you take the next step with confidence.

How Much Does It Cost to Develop an Artificial Reality Game?

This is nearly always the first question, and the honest answer is: it depends entirely on the ambition of the project. Putting a price on an artificial reality game is a bit like pricing a house; a simple one-bedroom bungalow comes with a very different price tag than a sprawling, multi-storey mansion. The final figure is shaped by the scale of your vision, from motion graphics logo stings to full-blown series animation. For instance, a simple branded AR filter for a social media campaign might only cost a few thousand pounds. On the other end of the spectrum, a complex, high-fidelity VR training simulation for a specialist enterprise application could easily be a six-figure investment. The key factors that influence the cost include:

- Visual Fidelity: Are you aiming for hyper-realistic graphics, or would a more stylised look better serve your goals?

- Interaction Complexity: How will the user interact with the world? Are we talking simple button presses or complex, physics-based object manipulation?

- Platform Choice: Deploying to mobile AR is a world away from building for high-end, PC-tethered VR headsets like the Valve Index.

- Overall Scope: The length of the experience, the number of unique environments, and the sheer volume of content all play a huge part.

The best way to get a firm cost is by starting with a discovery workshop. This process lets us work with you to define the project's exact scope and goals, which allows our team to provide a transparent, detailed budget that’s built around your investment and objectives.

What Is a Typical Timeline for an ARG Project?

Just like the budget, project timelines are tied directly to the complexity of the build. A focused AR application, for example, could be designed, developed, and launched in as little as 8-12 weeks. This would be for an experience with a very clear and contained scope. A more involved project, like a comprehensive VR training module or a location-based entertainment experience, will naturally need more time. These can take anywhere from 4 to 9 months, or sometimes even longer, depending on the depth of the content and the technical hurdles we need to clear. We always break our production process down into clear, manageable phases, so you have full visibility at every stage:

- Pre-Production (2-6 weeks): This is the blueprint phase. We define the concept, create storyboards and pre-visualisations, and map out the entire user journey.

- Production (6-20+ weeks): This is the core build. Our artists create the 3D assets while our developers build the experience in Unity or Unreal Engine.

- Post-Production (2-4 weeks): The final stage involves rigorous QA testing, performance optimisation, and getting the application ready for deployment on its target platforms.

Each phase ends with key milestones and review points. This structured approach means there are no nasty surprises, and we can be sure the project stays on track to hit your critical launch deadlines.
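As a quick sanity check on how the phase ranges add up, the Python sketch below sums the minimum and maximum durations listed above. It assumes the phases run one after another with no overlap, which is a simplification, and it treats the open-ended "20+" for production as 20, so the upper figure is a floor rather than a cap.

```python
# Phase ranges in weeks, taken from the list above.
# The production phase is listed as "6-20+", so its upper bound is open-ended.
PHASES = {
    "Pre-Production": (2, 6),
    "Production": (6, 20),
    "Post-Production": (2, 4),
}


def total_range_weeks(phases: dict[str, tuple[int, int]]) -> tuple[int, int]:
    """Sum the minimum and maximum durations across sequential phases."""
    shortest = sum(low for low, _ in phases.values())
    longest = sum(high for _, high in phases.values())
    return shortest, longest


if __name__ == "__main__":
    low, high = total_range_weeks(PHASES)
    print(f"Estimated total: {low} to {high}+ weeks")
```

Under those assumptions the phases total 10 to 30+ weeks, which sits between the focused AR builds and the longer VR projects described earlier.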

What Information Do We Need to Get Started?

You really don't need to arrive with a fully-formed technical specification to have a conversation with us. In fact, some of our most successful projects have grown from a simple "what if...?" idea. The single most valuable thing you can bring to the first meeting is a clear business goal. What are you ultimately trying to achieve?

- Are you looking to increase sales at an upcoming exhibition?

- Do you need to improve training outcomes and track compliance?

- Is the main goal to create a standout marketing moment that builds real brand loyalty?

Knowing who your target audience is and having a rough idea of your available budget is also a fantastic starting point. From there, our team can help you shape the idea. We specialise in guiding clients through this initial phase, helping translate a core business objective into a creative and technical brief that works. A "production scoping call" or a more in-depth "discovery workshop" is the perfect first step. It’s a no-obligation chat where we can explore the possibilities together and co-create a brief that will set your project up for success.

Ready to explore how an artificial reality game could transform your business? The team at Studio Liddell, a UK animation studio with a broadcast pedigree since 1996, is here to help you navigate every step, from initial idea to final launch. Book a production scoping call to start the conversation today.